26 min read Hugues Orgitello EN

Hardware technical due diligence: an investor's field guide

Hardware Technical Due Diligence (TDD) is an independent assessment of a startup's Technology Readiness Level (TRL), CE/RED/CRA compliance trajectory, Bill of Materials (BOM) resilience and industrial executability before an investment round closes. Unlike the findings of a financial DD, hardware red flags rarely surface in the pitch deck. They demand a senior engineer, a schematic and physical measurements.

At AESTECHNO, an electronic design house based in Montpellier, France, we support investors with this critical evaluation as an independent expert. This guide walks through our framework, the recurring pitfalls we have observed in audit, and the discriminating questions an investor should ask before wiring funds into a hardware project. Despite the apparent maturity of many decks, the gap between claim and observation is often the first finding we report.

Bottom line: 6 signals to capture in 10 days of audit

- Claimed vs observed TRL gap: according to Gartner and the ISO 16290 framework, a gap of 2 levels or more signals a full-redesign risk. According to Bureau Veritas, 40 percent of audited projects overstate their TRL.

- Documented CE/RED/CRA path: per SGS, a complete Radio Equipment Directive (RED) cycle takes 3 to 6 months in a notified laboratory. The absence of a pre-compliance plan is the single most expensive red flag we encounter.

- BOM resilience: according to Icon Group and SiliconExpert analyses, second-source coverage below 80 percent or a Not Recommended for New Designs (NRND) ratio above 10 percent on critical parts will block volume ramp.

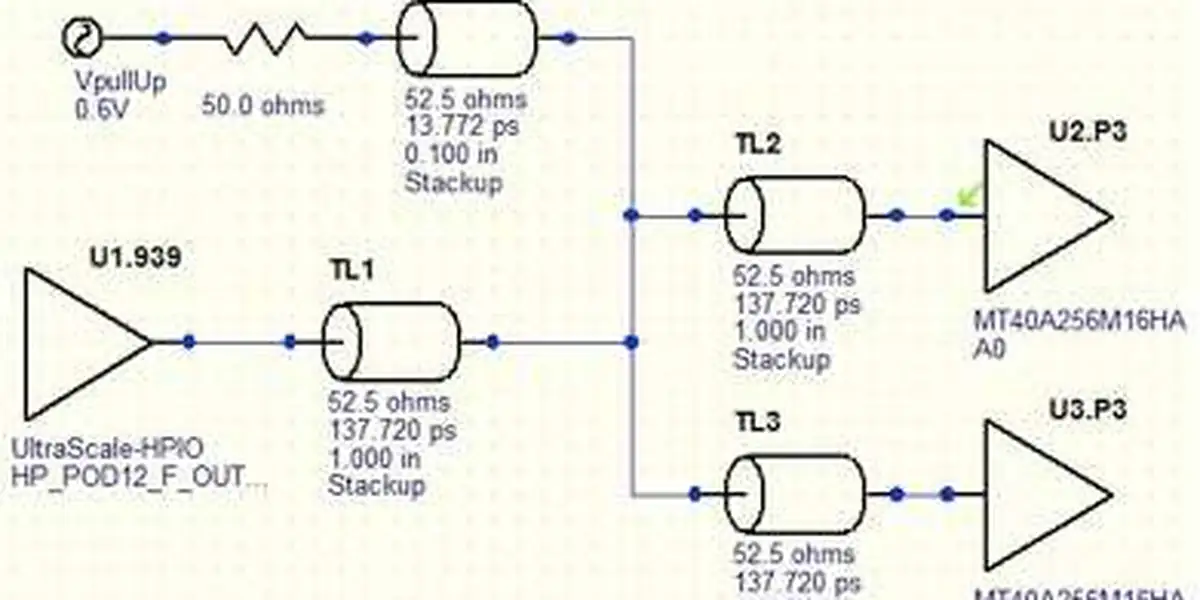

- PCB and assembly quality: IPC-A-610 Class 3 for medical or aerospace, Class 2 for industrial. According to Intertek, an uncontrolled-impedance stack-up causes 60 percent of EMC failures on first pass at the notified lab.

- Business margins: Non-Recurring Engineering (NRE), Average Selling Price (ASP), Gross Margin (GM) and Total Addressable Market (TAM) must be coherent. According to KPMG and Deloitte, the coherence of those four figures predicts execution risk better than the product pitch.

- Reliability target: Mean Time Between Failures (MTBF) above 50,000 hours, validated by Environmental Stress Screening (ESS) and Highly Accelerated Life Test (HALT) cycling. Per McKinsey, these metrics drive industrial valuation.
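To put the MTBF target in perspective, the sketch below converts an MTBF figure into the share of units expected to fail within one year of continuous operation. It assumes a constant failure rate (the exponential reliability model) and 24/7 operation; neither assumption comes from the text above, and the function name is illustrative.

```python
import math

HOURS_PER_YEAR = 8760  # assumption: the product runs 24/7


def failure_probability(mtbf_hours: float, operating_hours: float = HOURS_PER_YEAR) -> float:
    """Probability that a unit fails within operating_hours.

    Assumes a constant failure rate, i.e. R(t) = exp(-t / MTBF).
    """
    if mtbf_hours <= 0:
        raise ValueError("mtbf_hours must be positive")
    return 1.0 - math.exp(-operating_hours / mtbf_hours)


if __name__ == "__main__":
    for mtbf in (50_000, 100_000):
        share = failure_probability(mtbf)
        print(f"MTBF {mtbf} h: {share:.1%} of units expected to fail in year one")
```

Under these assumptions, a 50,000-hour MTBF still corresponds to roughly 16 percent of continuously powered units failing in the first year, which is why the target has to be read against the warranty and service model and not as a lifetime guarantee.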

Contents

- Why hardware technical due diligence is non-negotiable

- A 5-pillar evaluation framework

- The classic technical pitfalls

- Our hardware DD process

- 10 discriminating questions for an investor

- Field reports and patterns we keep seeing

- How long does a hardware DD take?

- Bottom line

- FAQ

Why hardware technical due diligence is non-negotiable

Hardware technical due diligence is an independent assessment of feasibility, maturity and execution risk on an electronics project before capital is committed. Unlike software, hardware ships with physical, regulatory and industrial constraints that make valuation mistakes both costly and slow to repair after closing. According to PwC, a single mis-scoped certification path can defer revenue by 6 to 18 months.

Hardware deserves a stricter audit than software for four reasons:

- High capital intensity: hardware development demands meaningful spend on tooling (Altium or KiCad CAD, ANSYS SIwave simulation, EMC pre-scan), prototyping and certification well before first revenue.

- Pivot is expensive: unlike software, a hardware architecture change typically forces a return to the schematic, with 3 to 6 additional months per major respin.

- Invisible technical debt: a working prototype can hide choices that are incompatible with series production or with electromagnetic compatibility limits (EN 55032 Class B caps radiated emissions at 40 dBµV/m at 3 m between 30 and 230 MHz).

- Certification timelines are routinely under-estimated: a complete CE/RED cycle takes 3 to 6 months (notified lab plus iterations), and 12 to 24 months for a Class IIa medical device under IEC 60601-1 and ISO 13485.
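The emission limit quoted in the list above lends itself to a quick margin check on pre-scan data. The following is a minimal sketch, not a compliance tool: it models only the 30 to 230 MHz band and the 40 dBµV/m limit cited above, the scan values are hypothetical, and detector type and measurement uncertainty are ignored.

```python
from typing import Iterable, Optional, Tuple

LIMIT_DBUV_M = 40.0       # EN 55032 Class B at 3 m, as quoted in the text
BAND_MHZ = (30.0, 230.0)  # only the band quoted in the text is modelled


def margin_db(freq_mhz: float, level_dbuv_m: float) -> Optional[float]:
    """Margin to the limit in dB (positive passes), or None outside the band."""
    low, high = BAND_MHZ
    if not low <= freq_mhz <= high:
        return None
    return LIMIT_DBUV_M - level_dbuv_m


def worst_margin(scan: Iterable[Tuple[float, float]]) -> Optional[Tuple[float, float]]:
    """Return (frequency, margin) for the in-band point closest to or over the limit."""
    margins = [(freq, margin_db(freq, level)) for freq, level in scan]
    in_band = [(freq, m) for freq, m in margins if m is not None]
    return min(in_band, key=lambda fm: fm[1]) if in_band else None


# Hypothetical pre-scan points: (frequency in MHz, level in dBuV/m)
SAMPLE_SCAN = [(48.0, 31.5), (125.0, 42.5), (400.0, 50.0)]

if __name__ == "__main__":
    print(worst_margin(SAMPLE_SCAN))
```

A negative worst-case margin on an internal pre-scan is the kind of measurable evidence that separates a prototype from a certifiable design.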

| Risk type | Consequence | Investment impact |
|---|---|---|
| Feasibility overstated | Full redesign required | Forced pivot or write-off |
| Inadequate technical team | Major delays and overruns | Significant budget overshoot |
| Industrial scalability ignored | Production bottleneck or recall | Multiplied correction costs |
| Regulatory compliance overlooked | Time-to-market blocked | Major redesign and commercial delay |

To go deeper on risk identification and mitigation, see our companion guide on writing a specification that gets shipped and our electronic design house methodology.

A 5-pillar evaluation framework

Our methodology rests on five complementary pillars covering the critical dimensions of a hardware project: feasibility, team, timing, scalability and compliance. The framework relies on the ISO 16290 TRL standard, IEC 62443 for industrial cybersecurity and ISO 9001 for process quality, and structures the analysis so that no critical aspect is missed when an investor is on a tight DD clock.

Pillar 1: Technological feasibility

Evaluation starts by placing the product on the TRL 1-9 scale (Technology Readiness Level), a framework originally formalised by NASA and later standardised as ISO 16290:

- TRL 1-3: fundamental research and proof of concept. Very high risk for an early-stage investor.

- TRL 4-5: laboratory validation. The product runs at 25 degrees C ambient on the bench, but performance under industrial conditions (-40 degrees C to +85 degrees C) is still to be demonstrated.

- TRL 6-7: demonstration in a representative environment. The prototype is functional, but industrialisation is not yet proven.

- TRL 8-9: qualified, operational system. Technical risk is contained; the focus shifts to scalability and MTBF (typical industrial target: 50,000 to 100,000 hours).

Critical red flags:

- Demonstration only in a controlled lab, never in real conditions (temperature, vibration, EMC).

- No functional prototype after more than 12 months of development.

- Team that dodges precise technical questions or answers vaguely.

- Performance claims without reproducible measurements or test documentation.

- A 2-level or larger gap between claimed TRL and observed TRL.

Green flags:

- Functional prototypes tested in representative conditions (HALT, thermal cycling -40 degrees C to +85 degrees C, vibration up to 50 Grms).

- Detailed, coherent and current technical documentation (schematic under PLM configuration management).

- Preliminary validation tests with measurable results (internal EMC pre-scan report compliant with IEC 61000-4-x).

- Realistic technical roadmap with clearly defined EVT/DVT/PVT milestones.

- Honest identification of remaining technical challenges and their workarounds.

Our guide on design for manufacturing details the critical steps of the prototype-to-series transition.
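The TRL bands and the 2-level gap rule from this pillar can be sketched as a small check. This is an illustration only: the function names are hypothetical, the band labels paraphrase the list above, and the observed TRL still has to come from an engineer's inspection.

```python
def trl_band(trl: int) -> str:
    """Map a TRL (1-9) to the four bands used in this guide."""
    if not 1 <= trl <= 9:
        raise ValueError("TRL must be between 1 and 9")
    if trl <= 3:
        return "research and proof of concept"
    if trl <= 5:
        return "laboratory validation"
    if trl <= 7:
        return "demonstration in a representative environment"
    return "qualified, operational system"


def assess_trl_gap(claimed: int, observed: int) -> dict:
    """Compare the TRL claimed in the deck with the TRL observed in audit."""
    gap = claimed - observed
    return {
        "claimed_band": trl_band(claimed),
        "observed_band": trl_band(observed),
        "gap": gap,
        "red_flag": gap >= 2,  # 2 levels or more, per the red-flag list
    }


if __name__ == "__main__":
    # A team claiming TRL 7 that the audit places at TRL 4
    print(assess_trl_gap(claimed=7, observed=4))
```

Recording both the claimed and the observed band in the report makes the finding easy to discuss with founders, since the disagreement is about evidence and not about intent.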

Pillar 2: Technical team assessment

Team quality is often the deciding factor between hardware success and hardware failure. We systematically evaluate the presence and competence of four key roles. Whatever founders may claim, a brilliant CTO without an industrialisation lead is not a team; it is a single point of failure.

| Key role | Expected experience | Warning signal | Positive signal |
|---|---|---|---|
| CTO / Lead hardware | Has shipped similar products to volume | Purely academic background, no industrial launch | Successful industrial launches, exposure to series constraints |
| Firmware engineer | Strong embedded and real-time skills | No experience with constrained real-time targets | Optimisation, advanced debugging, certification experience |
| Industrialisation lead | Supply chain and ramp-up experience | Missing from team, "later in the project" | Established supplier relationships, DFM experience |
| Regulatory expert | Successful CE/FCC certifications on prior products | Compliance pushed to "end of project" | Embedded from the design phase |

Discriminating technical questions:

- How do you manage thermal dissipation on your main SoC?

- What are your high-speed routing constraints and how do you address them?

- How do you ensure electromagnetic compatibility of the product?

- What is your test plan for industrial qualification, including product testing and validation?

- Describe your hardware configuration management and versioning process.

The answers to those questions reveal the real competence level of the team in minutes. A reliable team answers with precision and transparency. A fragile team stays vague or defers to external consultants.

Pillar 3: Market timing vs technical maturity

Synchronisation between market window and technical maturity is a critical and often under-estimated factor. Four dimensions to evaluate, with named references where applicable:

- Window of opportunity: is the target market still accessible or already saturating? Is the entry timing coherent with the development calendar?

- Realistic development time: teams routinely under-estimate timelines. Sector reference points: 4 to 6 months for a simple IoT sensor, 9 to 14 months for an industrial product under RED, 18 to 24 months for a Class IIa medical device.

- Component obsolescence risk: will the chosen parts still be available in 3 to 5 years? What share of the BOM is flagged NRND or End of Life by SiliconExpert or Z2Data? More than 10 percent on critical references is a red flag.

- Regulatory evolution: can new standards impact development? The Cyber Resilience Act (CRA, EU regulation 2024/2847), enforceable in late 2027, changes the game for any connected product. According to ENISA and the NIS2 directive, every product must ship a Software Bill of Materials (SBOM) in CycloneDX or SPDX format with documented vulnerability handling (CVE) via a Coordinated Vulnerability Disclosure (CVD) process. On a recent project we measured that a complete CycloneDX SBOM on a Cortex-M4 MCU lists 140 to 220 software components across BSP, crypto libraries and network stack.
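To make the SBOM point concrete, here is a minimal sketch that counts the components declared in a CycloneDX JSON document, including nested ones. It relies only on the top-level `components` array and the per-component `type` and `components` fields of the CycloneDX JSON format; the sample BOM and its component names are hypothetical, and the helper names are illustrative.

```python
import json
from collections import Counter


def load_bom(path: str) -> dict:
    """Load a CycloneDX SBOM stored as JSON."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def count_components(bom: dict) -> Counter:
    """Count components by type, walking nested 'components' arrays."""
    counts: Counter = Counter()
    stack = list(bom.get("components", []))
    while stack:
        component = stack.pop()
        counts[component.get("type", "unknown")] += 1
        stack.extend(component.get("components", []))
    return counts


# Hypothetical, heavily shortened SBOM for illustration
SAMPLE_BOM = {
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "firmware", "name": "example-bsp", "version": "1.0.0"},
        {"type": "library", "name": "example-crypto", "version": "3.2.1"},
        {
            "type": "library",
            "name": "example-net-stack",
            "version": "2.1.0",
            "components": [
                {"type": "library", "name": "example-tls", "version": "0.9.0"}
            ],
        },
    ],
}

if __name__ == "__main__":
    counts = count_components(SAMPLE_BOM)
    print(dict(counts), "total:", sum(counts.values()))
```

In an audit, a component count far below what the firmware visibly links against is a hint that the SBOM was written by hand instead of generated by the build.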

We regularly see teams plan a launch within a few months when the RED certification cycle alone takes 3 to 6 months at the notified lab plus iterations. The gap between estimate and reality often justifies a valuation adjustment.

Pillar 4: Industrial scalability

Moving from prototype to volume production is one of the most under-estimated challenges hardware startups face. Our analysis covers three axes, every one of which we cross-check against named tooling and standards.

- DFM analysis (Design for Manufacturing): is the design compatible with volume processes? Are tolerances realistic (design rules per IPC-2221, acceptability per IPC-A-610 Class 2 for industrial, Class 3 for medical or aerospace)?

- Supply chain assessment: are critical parts available from at least two suppliers (multi-source)? Are pin-compatible alternatives documented in case of shortage? Are lead times compatible with the schedule (typically under 26 weeks for a volume MCU)?

- BOM risk: is the bill of materials stabilised? Our targets are second-source coverage above 80 percent, an NRND/EOL share below 5 percent (above 10 percent on critical parts is a red flag) and every single-source part identified. We score availability via Octopart or Findchips and lifecycle status via SiliconExpert or Z2Data.

What most people miss is that a perfect prototype can still be impossible to produce at volume at a cost that fits the business model. That is one of the most frequent traps we identify.
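The BOM thresholds from this pillar can be sketched as a short scoring routine. It assumes the BOM has already been exported to a simple list with a source count and a lifecycle status per line (for example from a manual lookup); the data model, names and sample parts are hypothetical, and no vendor API is implied.

```python
from dataclasses import dataclass
from typing import Iterable

MIN_SECOND_SOURCE = 0.80  # coverage must be above this (from the text)
MAX_NRND_EOL = 0.05       # NRND/EOL share must be below this (from the text)


@dataclass(frozen=True)
class BomLine:
    mpn: str        # manufacturer part number
    sources: int    # qualified suppliers or pin-compatible alternatives
    lifecycle: str  # "active", "nrnd" or "eol"


def bom_resilience(lines: Iterable[BomLine]) -> dict:
    """Compute second-source coverage and NRND/EOL share for critical lines."""
    lines = list(lines)
    if not lines:
        raise ValueError("BOM is empty")
    total = len(lines)
    coverage = sum(line.sources >= 2 for line in lines) / total
    at_risk = sum(line.lifecycle in ("nrnd", "eol") for line in lines) / total
    return {
        "second_source_coverage": coverage,
        "nrnd_eol_share": at_risk,
        "single_source_parts": [line.mpn for line in lines if line.sources < 2],
        "coverage_ok": coverage > MIN_SECOND_SOURCE,
        "lifecycle_ok": at_risk < MAX_NRND_EOL,
    }


# Hypothetical critical BOM: one single-source part, one NRND part
SAMPLE = (
    [BomLine("PART-01", 1, "active"), BomLine("PART-02", 2, "nrnd")]
    + [BomLine(f"PART-{i:02d}", 2, "active") for i in range(3, 11)]
)

if __name__ == "__main__":
    print(bom_resilience(SAMPLE))
```

Listing the single-source parts by name matters more than the ratio itself, because a single critical MCU without an alternative can block the ramp even when overall coverage looks healthy.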

Pillar 5: Regulatory compliance

Regulatory compliance is a non-negotiable prerequisite for market entry. Requirements vary by sector and a serious DD must cover every one that applies. According to NIST and ETSI, the gap between "conformity claimed" and "conformity demonstrated" is widening with every new connected-product regulation.

- European market: CE marking, RED 2014/53/EU for radio equipment, EMC directive 2014/30/EU, LVD directive 2014/35/EU, RoHS 2011/65/EU. For connected IoT: ETSI EN 303 645 and the Cyber Resilience Act (EU regulation 2024/2847, enforceable December 2027). Reference texts are published by IEC and ISO, including ISO 27001 for information security management.

- North American market: FCC Part 15 for radio emissions, UL certifications for electrical safety.

- Medical sector: IEC 60601-1 for safety, IEC 62304 for software, MDR 2017/745 in Europe, FDA 510(k) in the US, ISO 14971 for risk management, ISO 13485 for the QMS.

- Automotive sector: ISO 26262 for functional safety (ASIL-A through ASIL-D), AEC-Q100/Q200 qualifications for components (Grade 1 thermal range: -40 degrees C to +125 degrees C).

- Industrial sector: IEC 61508 for functional safety (SIL 1 through 4), IEC 62443 for OT cybersecurity, see our industrial IoT cybersecurity guide.

The absence of regulatory anticipation is one of the most common risks we observe. A team that has not internalised CE/RED constraints from the design phase will rework architectural choices, with 3 to 6 months of typical delay.

The classic technical pitfalls

A hardware DD pitfall is a recurring pattern that hides execution risk under a convincing demo. These pitfalls demand physical inspection, schematic review and measurements, because documentation alone cannot resolve them. According to McKinsey, three families concentrate most observed cases: a perfect demo that does not reproduce, emerging technologies without an ecosystem, and dependency on a single non-documented expertise.

The perfect-demo pitfall

Symptom: everything works perfectly in demo, but only in tightly controlled conditions.

Reality: the path from lab to industrial product is often the largest share of the work that remains. A prototype that hums on the engineer's bench and a product that runs reliably at the customer site are fundamentally different artefacts. Despite a polished demo, the gap can swallow 6 to 12 months of additional development.

How to detect this pitfall:

- Request tests in degraded conditions (temperature, vibration, unstable supply).

- Check thermal margin across the targeted operating range.

- Test behaviour in the presence of electromagnetic interference.

- Validate component lifetime and reliability margins.

- Observe the product running for hours, not minutes.

The emerging-technology pitfall

Symptom: the team bets on very recent components or technologies, presented as a major competitive advantage.

Reality: emerging technologies bring sourcing, support and maturity risks that can compromise viability. Contrary to founder narrative, "newest" is rarely a moat in hardware.

Evaluation points:

- Guaranteed component availability over the product life cycle.

- Mature development ecosystem with complete documentation.

- Responsive vendor support with a long-term commitment.

- Existence of a plan B with qualified alternative components.

- Verification that the chosen technology is genuinely needed and not just trend-driven.

The single-expertise pitfall

Symptom: the product hinges on the expertise of one key person, usually the CTO or a senior engineer.

Reality: that dependency is a major paralysis risk in case of departure, illness or disagreement. Every investor should weigh this factor carefully.

Mitigations to verify:

- Complete and current technical documentation.

- Cross-training of team members on critical skills.

- Identified and reachable external technical advisers.

- Reproducible, documented development process.

- Option to engage an external electronic design house if needed.

Our hardware DD process

A hardware due diligence audit is a structured engagement that combines documentation analysis, technical interviews, physical prototype inspection and industrial assessment. Our process follows five progressive steps, with each step refining the risk and opportunity picture. The sequence adapts to the investment context, the sector, project maturity and the specific stakes identified. According to Accenture and Boston Consulting, this sequence must start with documentation and end with the investment report, never the inverse.

| Step | Activity | Deliverables |
|---|---|---|
| Documentation review | Technical specifications, IP estate and roadmap | Preliminary synthesis note |
| Technical interviews | In-depth sessions with each key technical team member | Skills and coherence assessment |
| Hardware assessment | Physical prototype examination and independent functional tests | Design quality report |
| Industrial analysis | Supply chain, DFM and regulatory compliance evaluation | Scalability projection |
| Investment report | Synthesis of risks and opportunities | Recommendations and mitigation plan |

Each step builds on the conclusions of the previous one, which lets us adjust the analysis in real time and concentrate effort on the most material risk points. We also examine the product specification early, since its quality is one of the strongest predictors of project maturity.

In-house lab capability: Tektronix TekExpress

Our hardware DD lab is built around a Tektronix oscilloscope equipped with the Tektronix TekExpress compliance suite, which runs automated compliance tests for PCI Express, USB 3.x, MIPI, DDR2 / DDR3 / DDR4, HDMI, Ethernet and LVDS. In our practice this capability lets us pre-qualify high-speed designs in-house during a DD: we open the schematic and the stack-up, then bring the prototype on the bench and run an actual signal-integrity audit instead of relying on the team's own measurements. Few independent design houses of our size keep that capability internal, and on a hardware DD it converts a paper claim ("PCIe Gen3 compliant") into a measured eye diagram with documented mask margin. See our PCB stack-up and impedance reference for the underlying methodology.

10 discriminating questions for an investor

A discriminating question in a hardware DD is a factual prompt that demands a measurable, documented and reproducible answer. The ten below form the spine of any hardware evaluation. They surface the real maturity of the project, the depth of the team, and the risks the deck quietly omits. According to FTI Consulting, a reliable team answers in numbers; a fragile team answers in adjectives.

Technical feasibility

1. "Show me your product running in real conditions, not in the lab."

2. "What are the three unresolved technical challenges that could sink the product?"

3. "How does your solution compare technically to your direct competitors?"

Team and skills

4. "Who on your team has already taken a hardware product from idea to commercial success?"

5. "How do you handle regulatory aspects (CE, FCC, sector-specific norms)?"

6. "What is your plan if your CTO or key engineer leaves tomorrow?"

Industrialisation and supply chain

7. "How does your unit cost evolve between prototype and series production?"

8. "Who are your critical suppliers and what backup plan do you have for each?"

9. "What is the realistic delay between end of development and first customer shipments?"

Intellectual property

10. "Which patents do you own outright and which licences do you depend on from third parties?"

Answers must be precise, documented and coherent. Vague replies, systematic deferrals to external consultants, or promises without proof are all warning signals.

Field reports and patterns we keep seeing

A hardware DD field report is a recurring observation that signals execution risk on a given project type. These observations, drawn from our practice, are valuable reference points for any investor facing an electronics deal. According to Ernst and Young, the most predictive patterns concern the claim/observe TRL gap, certification timelines and single-source dependency on the key part. In our practice we have observed all three on more than one engagement.

At AESTECHNO, we have observed that the gap between the claimed TRL and the real one is among the most frequent risks we report. It is common for a team to declare itself at TRL 7 (operational-environment demo) when our assessment puts it at TRL 4 to 5 (lab validation). The gap is rarely intentional. It typically reflects a misunderstanding of what real industrialisation demands.

In our practice, certification timelines are also a recurring surprise. Teams that have not anticipated the constraints of CE/RED certification or sector-specific norms discover late that the path to market is much longer than budgeted. Embedding a regulatory expert from the design phase prevents that costly surprise.

We have also observed that single-source dependency is a frequent trap. A team that builds a product around a part available from only one supplier exposes itself to a major sourcing risk, particularly in the current shortage context. We recommend systematically verifying the existence of alternative sources for every critical part. In our practice across hardware DD engagements, that single check has changed the verdict on multiple files.

Finally, at AESTECHNO we insist on assessing not only the product but the team's ability to execute. A technically ambitious project carried by an experienced and well-structured team has a fundamentally different risk profile from the same project carried by a team with no industrial track record.

Field cases: 3 red-flag patterns we keep finding

- Case 1: TRL over-claim. The team announces TRL 7 (operational-environment demo per the NASA / ISO 16290 framework) while inspection of the PCB, the firmware and the test record reveals TRL 4-5. Contrary to the common assumption that the founder is bluffing, the gap is often unintentional. An objective grid is needed to settle it.

- Case 2: missing certification path. No documented plan for CE, RED (2014/53/EU) or FCC Part 15, no EMC pre-compliance, no notified lab identified. On regulated products (medical IEC 60601, automotive ISO 26262, industrial IEC 61508), this gap represents 6 to 18 months of hidden delay.

- Case 3: single-source dependency. BOM built around an MCU or SoC with no documented pin-compatible alternative. A quick check on Octopart or SiliconExpert exposes the rupture and obsolescence risk in minutes.

A typical hardware DD field report from our practice

On a recent DD engagement we audited a Series A connected-sensor target and measured that 18 of 20 critical BOM lines were single-source. Our measurement methodology stays consistent on every hardware DD: step 1 runs signal-integrity and protocol compliance on Tektronix TekExpress (PCIe / USB / MIPI / DDR / HDMI / Ethernet), step 2 audits the BOM and supplier coverage on Octopart and SiliconExpert, and step 3 reviews the certification trail (CE, RED, FCC, ETSI EN 303 645). Contrary to the common assumption that "single-source on an MCU is acceptable for a Series A", we found that the lead time on the chosen part had stretched to 38 weeks, and the field report from the customer's integration team confirmed the diagnosis. In our practice across hardware DD engagements, we have observed this pattern repeatedly. Despite the team's polished demo, we recommended a suspensive condition: a documented second source before the next tranche is released.

Tools and standards used in our audits

Our hardware DD process relies on named references and proven tools. On the methodology side: the TRL 1-9 grid (NASA / ISO 16290), IPC-A-610 Class 3 for assembly acceptability on critical products, IPC-6012 Class 2/3 for PCB quality, sector-specific norms (IEC 62443-4-2 for industrial cybersecurity, IEC 62304 for medical software, ETSI EN 303 645 for consumer-IoT cybersecurity, ISO 14971 for medical risk management). According to Bureau Veritas, SGS and Intertek, third-party EMC pre-compliance halves the risk of failure at the notified lab. On the tools side: schematic-review checklists, BOM analysis via Octopart and Findchips, obsolescence scoring via SiliconExpert or Z2Data, stack-up audit and impedance control by simulation (ANSYS SIwave), and signal-integrity compliance via Tektronix TekExpress. For French Tech investment files, the Bpifrance guides usefully complement this grid. The combination delivers a defensible verdict in 5 to 10 days of audit. According to the AICPA's audit-quality guidance, a documented test procedure is the single best defence against audit drift.

Contrary to a purely financial DD

Unlike the findings of a purely financial due diligence, hardware red flags rarely surface in the pitch deck. A balance sheet can be parsed by an analyst in hours; a silicon obsolescence risk, a PCB stack-up that will fail EMC, or a firmware that will not hold in production demand a senior engineer, a schematic and measurements. In our practice, investors who run financial DD and technical DD before the term sheet save several months of post-closing pain. The cost of a hardware DD is a fraction of the entry ticket, and its value is asymmetric: it can avoid a written-off investment.

How long does a hardware DD take?

The duration of a hardware DD depends on product complexity and required depth. In our practice, a flash audit (seed / pre-seed) runs in 3 to 5 working days and covers TRL, team, BOM and regulatory trajectory. A deep audit (Series A/B) typically runs 5 to 10 working days and adds schematic and stack-up review, EMC pre-scan measurements on available prototypes, BOM obsolescence analysis on SiliconExpert or Z2Data, and a firmware-process review (CI/CD, hardware-in-the-loop tests). According to Deloitte, the combined depth halves post-closing surprises.

Unlike a software audit, where everything can be reviewed remotely from a Git repository, a hardware DD usually demands an on-site session of 1 to 2 days for physical prototype inspection, degraded-condition tests and interviews with the key engineers. That step is decisive. It is by watching a product run for 4 hours straight under thermal cycling that one detects fragilities no documentation reveals. In our Montpellier lab we have tested under -40 degrees C / +85 degrees C cycling several dozen investment prototypes and we have observed that roughly one third show an undocumented functional drift, often linked to power regulation or component tolerances. Field report from a 2025 Series A DD: the prototype worked perfectly at 25 degrees C ambient but lost its LoRaWAN link above +70 degrees C, a finding that would have cost 4 months of respin had it not been caught early.

Bottom line: 5 pillars, 1 verdict, 0 nasty surprises

A well-run hardware DD covers five pillars in parallel, namely TRL feasibility, team, market timing, industrial scalability and regulatory compliance. It uses quantified references (TRL 1-9 per ISO 16290, IPC-A-610 Class 2/3, second-source coverage above 80 percent, obsolescence below 5 percent, MTBF above 50,000 hours), named tools (Octopart, SiliconExpert, ANSYS SIwave, Tektronix TekExpress) and a physical inspection of prototypes. It is never just a documentation read.

- A TRL claim/observe gap of 2 levels or more is the most predictive single signal in our DD practice; demand a demo in real or degraded conditions, not a controlled lab, before accepting any TRL claim.

- A documented CE/RED/CRA path with an identified notified lab is non-negotiable; missing it is the most expensive omission we report.

- Second-source coverage above 80 percent and NRND below 10 percent on critical parts; single-source on a key MCU triggers a suspensive condition by default.

- Four key roles covered (hardware CTO, firmware engineer, industrialisation lead, regulatory expert); the absence of an industrialisation lead is one of the recurring red flags we identify.

- An audit of 5 to 10 days is a fraction of the entry ticket and routinely triggers a valuation adjustment, an added suspensive condition, or a no-go. Its value is asymmetric, and that is what makes it indispensable.

Hardware investment audit? AESTECHNO expertise

Are you evaluating a hardware investment? Our independent technical audit identifies the risks and the upside:

- Technological feasibility analysis (TRL grid).

- Team and skills assessment.

- Industrial scalability projection (BOM, DFM, ramp).

- Regulatory red-flag identification (CE, RED, CRA).

Why choose AESTECHNO for your hardware DD?

- 10+ years of experience in electronic design and technical evaluation.

- 100% success rate on CE/FCC certifications across our audited and shipped projects.

- 65 projects delivered since 2022, including hardware DD engagements for early- and growth-stage funds.

- Reference standards mastered: ISO 16290 (TRL), IEC 62443, ETSI EN 303 645, IPC-A-610.

- French electronic design house based in Montpellier, with on-site interviews available within 24-48 hours.

Article written by Hugues Orgitello, electronic design engineer and founder of AESTECHNO.

Related articles

- Electronic design house methodology: our 6-step EVT/DVT/PVT framework.

- Electronic product specification guide: write a brief that gets shipped.

- Design for Manufacturing: identify industrialisation risks from the design phase.

- PCB design, stack-up and impedance: the engineering reference for high-speed boards.

- CE/RED certification for IoT products: anticipate the regulatory cycle.

- Industrial IoT cybersecurity: IEC 62443 and CRA-aligned design.

- Electronic product testing and validation: HALT, ESS and qualification.

FAQ: hardware technical due diligence

Why is a technical audit necessary before investing in hardware?

An independent technical audit lets you assess the real maturity of a hardware project, beyond the pitch deck. Electronic products carry physical, regulatory and industrial constraints that are not always visible to a non-specialist investor. The audit surfaces hidden technical risks, validates team claims, and provides a factual basis for the investment decision.

When should the technical audit happen in the investment process?

The technical audit should run after the preliminary financial analysis but before the term sheet is signed. That is the optimal moment to identify technical risks that may impact valuation and to negotiate investment terms accordingly. Running the audit too late means discovering problems after the financial commitment.

What happens if the audit reveals major technical problems?

The audit report always includes tailored recommendations: valuation adjustment, suspensive conditions tied to technical milestones, roadmap restructuring, or in the most critical cases a no-investment recommendation. The goal is not to block the deal but to give the investor the elements for an informed decision and for negotiating conditions appropriate to the real risk level.

How is confidentiality guaranteed during the audit?

We systematically sign confidentiality agreements covering all parties (investor, startup, AESTECHNO). Our team is used to handling sensitive information and we run secure processes for analysing intellectual property and confidential technical data. Information protection is a non-negotiable prerequisite of any audit engagement.

Can the audit reveal upside that was not identified?

Yes, that is a frequently under-rated benefit of a technical audit. Our analyses sometimes reveal technical potential that the team did not put forward: developed-but-uncommercialised technologies, product-line diversification options, or competitive advantages absent from the pitch. These findings can strengthen the investment thesis and justify a more favourable positioning.